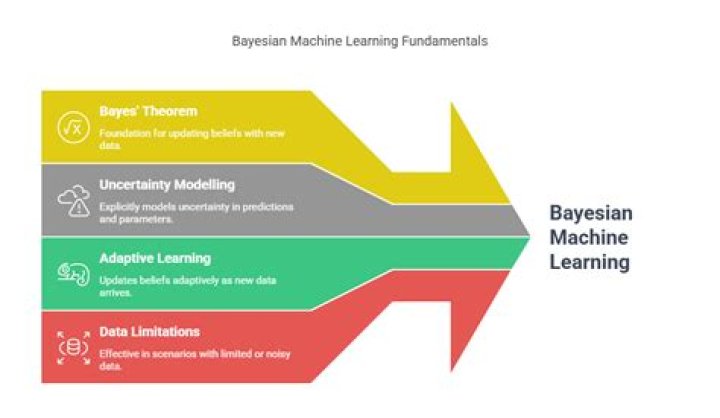

What are the advantages of Bayesian methods in machine learning?

Christopher Green

In respect to this, what are the advantages of Bayesian networks?

They provide a natural way to handle missing data, allow data to be combined with domain knowledge, facilitate learning about causal relationships between variables, provide a method for avoiding overfitting (Heckerman, 1995), and can show good prediction accuracy even with rather small sample sizes.
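
As a rough illustration of the missing-data point, here is a minimal sketch of inference by enumeration on a hypothetical three-node network (Rain -> WetGrass <- Sprinkler). The network, the variable names, and every probability are made-up assumptions for illustration, not taken from the cited work. Any variable that is not observed is simply summed out.

```python
from itertools import product

# Hypothetical network: Rain -> WetGrass <- Sprinkler.
# All probabilities are illustrative values, not real data.
P_RAIN = {True: 0.2, False: 0.8}
P_SPRINKLER = {True: 0.1, False: 0.9}
# P(WetGrass=True | Rain, Sprinkler), keyed by (rain, sprinkler)
P_WET_GIVEN = {
    (True, True): 0.99,
    (True, False): 0.90,
    (False, True): 0.80,
    (False, False): 0.05,
}


def joint(rain, sprinkler, wet):
    """Joint probability from the network factorization."""
    p_wet = P_WET_GIVEN[(rain, sprinkler)]
    return P_RAIN[rain] * P_SPRINKLER[sprinkler] * (p_wet if wet else 1.0 - p_wet)


def posterior_rain(evidence):
    """P(Rain=True | evidence); unobserved variables are summed out."""
    totals = {True: 0.0, False: 0.0}
    for rain, sprinkler, wet in product([True, False], repeat=3):
        assignment = {"rain": rain, "sprinkler": sprinkler, "wet": wet}
        # Keep only assignments consistent with what was observed.
        if all(assignment[name] == value for name, value in evidence.items()):
            totals[rain] += joint(rain, sprinkler, wet)
    return totals[True] / (totals[True] + totals[False])


# Sprinkler is "missing" in the first query and observed in the second.
print(posterior_rain({"wet": True}))
print(posterior_rain({"wet": True, "sprinkler": True}))
```

Enumeration is exponential in the number of variables, so it only suits toy networks like this one; it is used here because it makes the marginalization over missing values explicit.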

Secondly, what are the advantages and disadvantages of the naïve Bayes algorithm?

When the assumption of independent predictors holds, a Naive Bayes classifier can perform better than other models. Naive Bayes also requires only a small amount of training data to estimate its parameters, so the training period is short.

In this manner, is Bayesian statistics useful for machine learning?

Since Bayesian statistics provides a framework for updating "knowledge", it is in fact used a whole lot in machine learning. Having said that, totally non-Bayesian machine learning methods also exist.
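
To make "updating knowledge" concrete, here is a minimal sketch using a Beta prior over a coin's success probability, updated with observed successes and failures. The function names and the counts are hypothetical, chosen only for illustration.

```python
def update_beta(alpha, beta, successes, failures):
    """Return posterior Beta parameters after observing Bernoulli outcomes."""
    return alpha + successes, beta + failures


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# Start from a uniform prior, Beta(1, 1), then update in two batches.
prior = (1.0, 1.0)
after_first = update_beta(*prior, successes=7, failures=3)
after_second = update_beta(*after_first, successes=2, failures=8)

print(beta_mean(*prior), beta_mean(*after_first), beta_mean(*after_second))
```

The posterior from one batch becomes the prior for the next, and updating in batches gives the same result as updating once with all the data.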

What is a disadvantage of Bayesian classification?

The first disadvantage is that the Naive Bayes classifier makes a very strong assumption on the shape of your data distribution, i.e. any two features are independent given the output class. Due to this, the result can be (potentially) very bad - hence, a “naive” classifier.
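
Written out, the independence assumption looks roughly like this, using notation assumed here rather than taken from the source: $y$ is the class and $x_1, \dots, x_n$ are the features.

$$
P(y \mid x_1, \dots, x_n) \;\propto\; P(y) \prod_{i=1}^{n} P(x_i \mid y),
\qquad
\hat{y} = \arg\max_{y} \; P(y) \prod_{i=1}^{n} P(x_i \mid y)
$$

The product replaces the full class-conditional distribution $P(x_1, \dots, x_n \mid y)$. When features are strongly correlated within a class, the same evidence is effectively counted more than once, which is where the poor results can come from.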

Related Question Answers

How do Bayesian networks work?

Bayesian networks aim to model conditional dependence, and therefore causation, by representing conditional dependence by edges in a directed graph. Through these relationships, one can efficiently conduct inference on the random variables in the graph through the use of factors.

What is Bayesian network in machine learning?

A Bayesian network is a compact, flexible and interpretable representation of a joint probability distribution. It is also a useful tool in knowledge discovery, as directed acyclic graphs allow representing causal relations between variables. Typically, a Bayesian network is learned from data.

Is Bayesian network a machine learning?

Bayesian networks (BN) and Bayesian classifiers (BC) are traditional probabilistic techniques that have been successfully used by various machine learning methods to help solve a variety of problems in many different domains.

What do Bayesian networks predict?

Crucially, Bayesian networks can also be used to predict the joint probability over multiple outputs (discrete and/or continuous). This is useful when it is not enough to predict two variables separately, whether using separate models or even when they are in the same model.

What is Bayesian network with example?

A Bayesian Network (BN) is a directed acyclic graph. It represents a joint probability distribution (JPD) over a set of random variables V. Using a directed graphical model, a Bayesian network describes random variables and their conditional dependencies. For example, you can use a BN to model a patient suffering from a particular disease.

Why is there a Bayesian network?

Because a Bayesian network is a complete model for its variables and their relationships, it can be used to answer probabilistic queries about them. For example, the network can be used to update knowledge of the state of a subset of variables when other variables (the evidence variables) are observed.

Where is Bayesian statistics used?

What are the applications? Simply put, you can use Bayesian statistics in any application area where you have lots of heterogeneous or noisy data, or anywhere you need a clear understanding of your uncertainty.

What is Bayesian technique?

Bayesian inference is a method of statistical inference in which Bayes' theorem is used to update the probability for a hypothesis as more evidence or information becomes available. Bayesian inference is an important technique in statistics, and especially in mathematical statistics.

How important is Bayesian statistics?

Bayesian analysis is perhaps best seen as a process for obtaining posterior distributions or predictions based on a range of assumptions about both prior distributions and likelihoods: arguing in this way, sensitivity analysis and reasoned justification for both prior and likelihood become vital.

How do you explain Bayesian statistics?

“Bayesian statistics is a mathematical procedure that applies probabilities to statistical problems. It provides people the tools to update their beliefs in the evidence of new data.”

What is Bayesian analysis used for?

Bayesian inference is a method of statistical inference in which Bayes' theorem is used to update the probability for a hypothesis as more evidence or information becomes available. Bayesian inference is an important technique in statistics, and especially in mathematical statistics.

Why is Bayesian deep learning?

Bayesian deep learning offers principled uncertainty estimates from deep learning architectures. These deep architectures can model complex tasks by leveraging the hierarchical representation power of deep learning, while also being able to infer complex multi-modal posterior distributions.

Who is Bayesian?

Thomas Bayes (born 1702, London, England; died April 17, 1761, Tunbridge Wells, Kent), English Nonconformist theologian and mathematician who was the first to use probability inductively and who established a mathematical basis for probability inference (a means of calculating, from the frequency with which an event

Is deep learning Bayesian?

Bayesian deep learning is a field at the intersection between deep learning and Bayesian probability theory. Bayesian deep learning models typically form uncertainty estimates by either placing distributions over model weights, or by learning a direct mapping to probabilistic outputs.

What is the advantage of using an iterative algorithm?

Answer: The advantage of using an iterative algorithm is that it does not use much memory. Its expressive power, however, is rather limited. An iterative method repeats a loop until the desired number or sequence is obtained.

What are the advantages and disadvantages of SVM?

With an appropriate kernel function, an SVM can model complex, nonlinear problems. Unlike neural networks, SVM training is a convex problem, so it does not get stuck in local optima. It scales relatively well to high-dimensional data. SVM models tend to generalize well in practice, so the risk of over-fitting is lower.

Why is the Bayes classifier optimal?

Since this is the most probable value among all possible target values v, the optimal Bayes classifier maximizes the performance measure e(f̂). For this reason, the Bayes classifier is used as a benchmark against which the performance of all other classifiers is compared.

What are the advantages and disadvantages of logistic regression?

Logistic regression not only gives a measure of how relevant a predictor is (coefficient size), but also its direction of association (positive or negative). Logistic regression is also easy to implement and interpret, and very efficient to train.

What is the difference between Bayes and naive Bayes?

Naive Bayes assumes conditional independence, P(X|Y,Z)=P(X|Z), whereas more general Bayes nets (sometimes called Bayesian belief networks) allow the user to specify which attributes are, in fact, conditionally independent.

How does naive Bayes algorithm work?

Naive Bayes is a kind of classifier that uses Bayes' theorem. It predicts membership probabilities for each class, such as the probability that a given record or data point belongs to a particular class. The class with the highest probability is considered the most likely class.

Is naive Bayes efficient?

Naive Bayes is one of the most efficient and effective inductive learning algorithms for machine learning and data mining. Its competitive performance in classification is surprising, because the conditional independence assumption on which it is based is rarely true in real-world applications.

What are the advantages and disadvantages of decision trees?

A decision tree model is hard to reproduce, because a small change in the data can result in a large change in the tree structure. The space and time complexity of a decision tree model is relatively high, so training time is also relatively long.

Which classifier converges easily with less training data?

The Naïve Bayes classifier converges easily with less training data. Explanation: In recent years, machine learning has been gaining popularity among data scientists.

What is random forest in machine learning?

Random forests or random decision forests are an ensemble learning method for classification, regression and other tasks that operate by constructing a multitude of decision trees at training time and outputting the class that is the mode of the classes (classification) or mean prediction (regression) of the individual

Why naive Bayes is a bad estimator?

On the flip side, although naive Bayes is known as a decent classifier, it is known to be a bad estimator, so the probability outputs from predict_proba are not to be taken too seriously.

Why is naive Bayes good for text classification?

Since a Naive Bayes text classifier is based on Bayes' theorem, which helps us compute the conditional probabilities of occurrence of two events based on the probabilities of occurrence of each individual event, encoding those probabilities is extremely useful.

How do you train a Naive Bayes classifier?

Here's a step-by-step guide to help you get started.

- Create a text classifier.

- Select 'Topic Classification'

- Upload your training data.

- Create your tags.

- Train your classifier.

- Change to Naive Bayes.

- Test your Naive Bayes classifier.

- Start working with your model.
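
The steps above describe a point-and-click workflow. For readers who prefer code, the same idea can be sketched as a small multinomial Naive Bayes text classifier with add-one (Laplace) smoothing. This is an illustrative sketch and not how any particular tool implements it; the function names, the whitespace tokenizer, and the four training sentences are all assumptions made up for the example.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(documents):
    """Count labels and per-label words from (text, label) pairs."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for text, label in documents:
        label_counts[label] += 1
        for word in text.lower().split():
            word_counts[label][word] += 1
            vocabulary.add(word)
    return label_counts, word_counts, vocabulary


def predict(model, text):
    """Return the label with the highest log posterior score."""
    label_counts, word_counts, vocabulary = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in label_counts.items():
        score = math.log(count / total_docs)  # log prior
        total_words = sum(word_counts[label].values())
        for word in text.lower().split():
            if word not in vocabulary:
                continue  # ignore words never seen in training
            # Add-one smoothing avoids zero probabilities.
            likelihood = (word_counts[label][word] + 1) / (total_words + len(vocabulary))
            score += math.log(likelihood)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Tiny made-up training set, for illustration only.
TRAINING_DATA = [
    ("cheap pills buy now", "spam"),
    ("limited offer buy cheap", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]
model = train_naive_bayes(TRAINING_DATA)
print(predict(model, "buy cheap pills"))
print(predict(model, "monday team meeting"))
```

Scores are summed in log space so that multiplying many small probabilities does not underflow.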