What is the difference between the cost function and the loss function for logistic regression?

Owen Barnes


Besides this, what is the cost function of logistic regression?

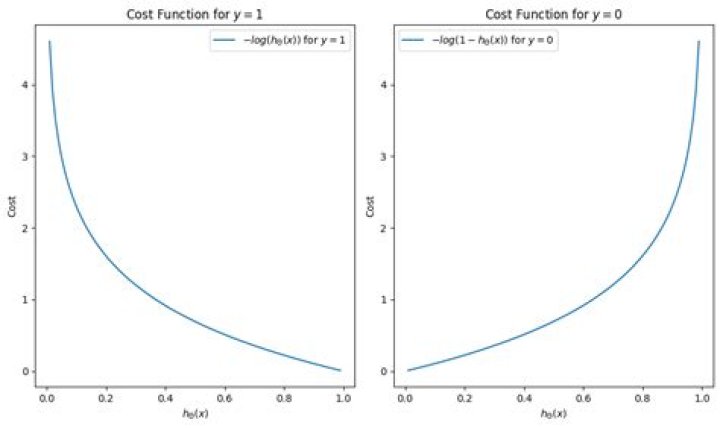

For logistic regression, the cost function is defined as:

$$\text{Cost}(h_\theta(x), y) = \begin{cases} -\log(h_\theta(x)) & \text{if } y = 1 \\ -\log(1 - h_\theta(x)) & \text{if } y = 0 \end{cases}$$
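The piecewise cost above can be sketched in a few lines of Python. This is only an illustration: the names `sigmoid` and `example_cost` are made up here, and `h` stands for the model output hθ(x), assumed to lie strictly between 0 and 1.

```python
import math

def sigmoid(z):
    """Logistic function, used here to produce h = h_theta(x) from a raw score z."""
    return 1.0 / (1.0 + math.exp(-z))

def example_cost(h, y):
    """Cost for a single example: -log(h) if y == 1, -log(1 - h) if y == 0."""
    if y == 1:
        return -math.log(h)
    return -math.log(1.0 - h)

# A confident correct prediction is cheap, a confident wrong one is expensive.
confident_right = example_cost(sigmoid(3.0), 1)
confident_wrong = example_cost(sigmoid(-3.0), 1)
print(confident_right, confident_wrong)
```

The cost grows without bound as the prediction approaches the wrong extreme, which is what penalizes confident mistakes so heavily.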

Furthermore, what is the loss function for logistic regression?

The loss function for logistic regression is Log Loss:

$$\text{Log Loss} = \sum_{(x, y) \in D} -y \log(y') - (1 - y) \log(1 - y')$$

Here $(x, y) \in D$ is the data set containing many labeled examples, which are $(x, y)$ pairs, and $y$ is the label in a labeled example. Since this is logistic regression, every value of $y$ must be either 0 or 1. $y'$ is the predicted value (somewhere between 0 and 1), given the set of features in $x$.
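As a rough sketch, the log loss over a data set of labeled pairs can be computed by summing the per-example terms. The function name `log_loss`, the toy labels and predictions, and the small `eps` clipping (added only to avoid `log(0)`) are assumptions for illustration.

```python
import math

def log_loss(labels, predictions, eps=1e-15):
    """Sum of -y*log(y') - (1 - y)*log(1 - y') over all labeled examples."""
    total = 0.0
    for y, y_pred in zip(labels, predictions):
        # Clip the prediction away from 0 and 1 (an assumption, not part of the formula).
        p = min(max(y_pred, eps), 1.0 - eps)
        total += -y * math.log(p) - (1 - y) * math.log(1.0 - p)
    return total

labels = [1, 0, 1, 1]                 # every label is 0 or 1
predictions = [0.9, 0.2, 0.7, 0.4]    # predicted values between 0 and 1

total_loss = log_loss(labels, predictions)
mean_loss = total_loss / len(labels)  # averaging gives a per-example figure
print(total_loss, mean_loss)
```

Dividing by the number of examples is a common convention that makes the value comparable across data sets of different sizes.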

Besides the above, what is the difference between a loss function, a cost function, and an objective function?

"The function we want to minimize or maximize is called the objective function, or criterion." The term cost function is used more in optimization problems, while loss function is used more in parameter estimation.

What is loss function in neural network?

A loss function is used to optimize the parameter values in a neural network model. Loss functions map a set of parameter values for the network onto a scalar value that indicates how well those parameters accomplish the task the network is intended to do.
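To make the "parameters in, scalar out" idea concrete, here is a minimal sketch using a single sigmoid neuron as the network. The toy data, the parameter values, and the names `predict` and `network_loss` are all hypothetical, and mean cross-entropy is just one possible choice of loss.

```python
import math

def predict(w, b, x):
    """Single sigmoid neuron: weighted sum of the inputs passed through the logistic function."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def network_loss(w, b, data):
    """Map the parameter values (w, b) to one scalar: the mean cross-entropy over the data."""
    total = 0.0
    for x, y in data:
        p = predict(w, b, x)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(data)

# Toy data: the label simply equals the first feature.
data = [([0.0, 1.0], 0), ([1.0, 0.0], 1), ([1.0, 1.0], 1), ([0.0, 0.0], 0)]

good = network_loss([4.0, 0.0], -2.0, data)   # parameters that fit the data
bad = network_loss([-4.0, 0.0], 2.0, data)    # parameters that predict the opposite
print(good, bad)
```

Training amounts to searching for the parameter values that make this scalar as small as possible.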

Related Question Answers

What is logistic regression good for?

Logistic regression is the appropriate regression analysis to conduct when the dependent variable is dichotomous (binary). Logistic regression is used to describe data and to explain the relationship between one dependent binary variable and one or more nominal, ordinal, interval or ratio-level independent variables.

What is the logistic growth function?

An exponential growth function ƒ(x) increases without bound as x increases. On the other hand, the logistic growth function $y = \frac{c}{1 + ae^{-rx}}$ (with positive constants a, c, and r) has y = c as an upper bound. Logistic growth functions are used to model real-life quantities whose growth levels off because the rate of growth changes from an increasing growth rate to a decreasing growth rate.

How do you explain logistic regression?

Logistic regression, also known as logit regression or the logit model, is a mathematical model used in statistics to estimate the probability of an event occurring, given some previous data. Logistic regression works with binary data, where either the event happens (1) or the event does not happen (0).

What are the assumptions of logistic regression?

Basic assumptions that must be met for logistic regression include independence of errors, linearity in the logit for continuous variables, absence of multicollinearity, and lack of strongly influential outliers.

What is weight in logistic regression?

The interpretation of the weights in logistic regression differs from the interpretation of the weights in linear regression, since the outcome in logistic regression is a probability between 0 and 1. The weighted sum is transformed by the logistic function to a probability.

What is logistic regression in ML?

Logistic regression is a supervised learning classification algorithm used to predict the probability of a target variable. It is one of the simplest ML algorithms and can be used for various classification problems such as spam detection, diabetes prediction, and cancer detection.

How do you do logistic regression?

Use multiple logistic regression when the dependent variable is nominal and there is more than one independent variable. It is analogous to multiple linear regression, and all of the same caveats apply. Use linear regression when the Y variable is a measurement variable.

What is the difference between linear regression and logistic regression?

In linear regression, the outcome (dependent variable) is continuous. It can have any one of an infinite number of possible values. In logistic regression, the outcome (dependent variable) has only a limited number of possible values. Logistic regression is used when the response variable is categorical in nature.

What is standard normal loss function?

F(Z) is the probability that a variable from a standard normal distribution will be less than or equal to Z, or alternately, the service level for a quantity ordered with a z-value of Z. L(Z) is the standard loss function, i.e. the expected number of lost sales as a fraction of the standard deviation.

What are the different loss functions?

There are several common loss functions to choose from: the cross-entropy loss, the mean-squared error, the Huber loss, and the hinge loss, to name a few. The paper "Some Thoughts About the Design of Loss Functions" discusses the choice and design of loss functions.

What is the difference between loss and cost function?

Generally, a cost function measures how far the model is from capturing the exact relationship between X and Y, and is typically computed over the whole training set, for example as the average of the per-example losses. A loss function is defined on a single data point, its prediction, and its label, and measures the penalty: it computes the error for a single training example.

How does loss function work?

At its core, a loss function is incredibly simple: it's a method of evaluating how well your algorithm models your dataset. If your predictions are totally off, your loss function will output a higher number. If they're pretty good, it'll output a lower number.

What does loss function do?

In mathematical optimization and decision theory, a loss function or cost function is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event.

Can a loss function be negative?

In general a cost function can be negative. Many loss or cost functions are designed with an absolute minimum of 0 possible for "no error" results. In supervised learning that is often a simple consequence of basing the cost on the difference between the model outputs and desired outputs.

What is loss function in deep learning?

Machines learn by means of a loss function. It's a method of evaluating how well a specific algorithm models the given data. If predictions deviate too much from actual results, the loss function will produce a very large number.

What is cost function in neural network?

A cost function is a measure of "how good" a neural network did with respect to its given training sample and the expected output. It also may depend on variables such as weights and biases. A cost function is a single value, not a vector, because it rates how good the neural network did as a whole.

Can cost function be negative?

In general a cost function can be negative. The more negative, the better of course, because you are measuring a cost and the objective is to minimise it. A standard mean squared error function cannot be negative. The lowest possible value is 0, when there is no output error from any example input.

Which method gives the best fit for logistic regression model?

Just as ordinary least squares regression is the method used to estimate coefficients for the best fit line in linear regression, logistic regression uses maximum likelihood estimation (MLE) to obtain the model coefficients that relate predictors to the target.

Why logistic regression is called regression?

Logistic regression is regression because it finds relationships between variables. It is logistic because it uses the logistic function, the inverse of the logit link function, to map the linear predictor to a probability. Hence the full name.

What is two class logistic regression?

Logistic regression is a well-known method in statistics that is used to predict the probability of an outcome, and is especially popular for classification tasks. The algorithm predicts the probability of occurrence of an event by fitting data to a logistic function.

Why do we use sigmoid and not any increasing function from 0 to 1?

The sigmoid function curve looks like an S-shape. The main reason we use the sigmoid function is that its output lies between 0 and 1. Therefore, it is especially used for models where we have to predict a probability as an output. The function is monotonic, but its derivative is not.

How does Python implement logistic regression?

Let's start implementing logistic regression in Python!

Logistic Regression in Python With StatsModels: Example

- Step 1: Import Packages. All you need to import is NumPy and statsmodels.api :

- Step 2: Get Data. You can get the inputs and output the same way as you did with scikit-learn.

- Step 3: Create a Model and Train It.

Why do we use log in logistic regression?

log(p/(1−p)) is the link function. A logarithmic transformation of the outcome variable allows us to model a non-linear association in a linear way. This is the equation used in logistic regression. Here p/(1−p) is the odds.

What is score in logistic regression?

The logistic probability score function allows the user to obtain a predicted probability score of a given event using a logistic regression model. The logistic probability score works by specifying the dependent variable (binary target) and independent variables as input. Where p is the probability of the event (Y).

How do you prove a loss function is convex?

To quote Wikipedia's convex function article: "If the function is twice differentiable, and the second derivative is always greater than or equal to zero for its entire domain, then the function is convex." If the second derivative is always greater than zero then it is strictly convex.

How do neural networks reduce loss?

If the training loss is much lower than the validation loss, the network may be overfitting. Solutions to this are to decrease your network size or to increase dropout; for example, you could try a dropout of 0.5. If your training and validation losses are about equal, then your model is underfitting. In that case, increase the size of your model (either the number of layers or the number of neurons per layer).

What is a good loss value?

Loss value implies how well or poorly a certain model behaves after each iteration of optimization. Ideally, one would expect the reduction of loss after each, or several, iteration(s). For example, if the number of test samples is 1000 and model classifies 952 of those correctly, then the model's accuracy is 95.2%.

What is Backpropagation and how does it work?

Backpropagation is a short form for "backward propagation of errors." It is a standard method of training artificial neural networks. This method helps to calculate the gradient of a loss function with respect to all the weights in the network.

Why do we use hinge loss?

In machine learning, the hinge loss is a loss function used for training classifiers. The hinge loss is used for "maximum-margin" classification, most notably for support vector machines (SVMs).

What is validation loss?

The loss is calculated on the training and validation sets, and its interpretation is based on how well the model is doing on these two sets. It is the sum of errors made for each example in the training or validation set. It is a measure of how accurate your model's predictions are compared to the true data.

What is Backpropagation in neural network?

Backpropagation, short for "backward propagation of errors," is an algorithm for supervised learning of artificial neural networks using gradient descent. Given an artificial neural network and an error function, the method calculates the gradient of the error function with respect to the neural network's weights.

How do I stop Overfitting?

How to Prevent Overfitting

- Cross-validation. Cross-validation is a powerful preventative measure against overfitting.

- Train with more data. It won't work every time, but training with more data can help algorithms detect the signal better.

- Remove features.

- Early stopping.

- Regularization.

- Ensembling.
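
As one illustration from the list above, early stopping can be sketched as a loop that watches the validation loss and stops once it has failed to improve for a few epochs. The function name `early_stopping_epoch`, the `patience` parameter, and the loss values are hypothetical; real training code would compute each validation loss as it goes.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (best_epoch, best_loss), stopping after `patience` epochs without improvement."""
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # New best validation loss: remember it and reset the counter.
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break  # validation loss has stalled, so stop training here
    return best_epoch, best_loss

# Made-up validation losses per epoch; the loss starts rising after epoch 2.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.50]
best_epoch, best_loss = early_stopping_epoch(val_losses, patience=2)
print(best_epoch, best_loss)
```

Note the trade-off: with a small `patience`, training stops before reaching the later, lower loss at the final epoch, so the value is usually tuned to the noise in the validation curve.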